Audio indexation

From HLT@INESC-ID

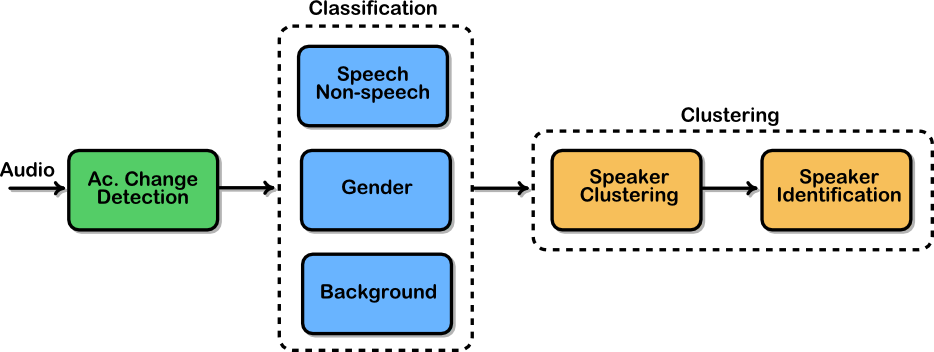

Broadcast news media monitoring is an important technology, but it poses a number of challenges for speech processing, both in computational complexity and in transcription accuracy. In this kind of application, the speech signal has to be not only transcribed but also characterized in terms of its acoustic content. Most present-day transcription systems perform some kind of audio characterization (segmentation and labelling) as the first step in the processing chain.

Audio pre-processing offers some practical advantages: no time is wasted processing non-speech intervals, there is no need to process very long speech chunks, and gender- or speaker-dependent acoustic models can be selected during recognition. On the other hand, indexing errors may cause extra transcription errors, for instance when a speaker change is hypothesized in the middle of an utterance or, even worse, in the middle of a word.

To accomplish this audio characterization, the first stage in our media monitoring system is an audio pre-processor. This pre-processor is responsible for:

- Segmentation of the signal into acoustically homogeneous regions

- Classification of those segments as speech or non-speech, and according to background conditions and speaker gender

- Identification of all segments uttered by the same speaker
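
As an illustration of what such a pre-processor might hand to the recognizer, the sketch below models one labelled segment and shows how the labels could be used to skip non-speech intervals and pick a gender-dependent acoustic model. It is a minimal sketch, not the actual system: the `Segment` fields, the background labels, the speaker labels and the model names (`am_male`, `am_female`, `am_generic`) are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Segment:
    """One acoustically homogeneous region (hypothetical record layout)."""
    start: float                       # start time in seconds
    end: float                         # end time in seconds
    is_speech: bool                    # speech / non-speech decision
    background: Optional[str] = None   # assumed labels, e.g. "clean", "music", "noise"
    gender: Optional[str] = None       # "male" or "female", if known
    speaker_id: Optional[str] = None   # cluster label shared by segments of one speaker


def segments_to_transcribe(segments: List[Segment]) -> List[Segment]:
    """Keep only speech segments so no time is spent on non-speech intervals."""
    return [s for s in segments if s.is_speech]


def acoustic_model_for(segment: Segment) -> str:
    """Pick a gender-dependent model name, falling back to a generic one."""
    models = {"male": "am_male", "female": "am_female"}  # hypothetical model names
    return models.get(segment.gender, "am_generic")


# Invented example output of the pre-processor for a short news excerpt.
segments = [
    Segment(0.0, 4.2, False, background="music"),
    Segment(4.2, 19.8, True, "clean", "female", "spk01"),
    Segment(19.8, 31.5, True, "noise", "male", "spk02"),
    Segment(31.5, 40.0, True, "clean", "female", "spk01"),
]

speech = segments_to_transcribe(segments)
models = [acoustic_model_for(s) for s in speech]
```

In this layout the three responsibilities map onto separate fields: the `start`/`end` pair comes from segmentation, `is_speech`, `background` and `gender` from classification, and `speaker_id` from grouping segments by speaker.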

The segmentation provides information regarding speaker turns and identities, allowing for automatic retrieval of all occurrences of a particular speaker. It can also be used to improve recognition performance through adaptation of the speech recognition acoustic models. Additionally, the final transcriptions, enriched with the pre-processing information, are somewhat more human-readable.
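
To make the retrieval and readability points concrete, here is a small sketch that builds a per-speaker index of time intervals and renders a transcript annotated with speaker and gender labels. The `Turn` record, the speaker labels and the sample text are invented for illustration and do not reflect the system's real output format.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple, Tuple


class Turn(NamedTuple):
    """A transcribed speaker turn with pre-processing labels (hypothetical)."""
    start: float
    end: float
    speaker_id: str
    gender: str
    text: str


def index_by_speaker(turns: List[Turn]) -> Dict[str, List[Tuple[float, float]]]:
    """Map each speaker label to the time intervals in which that speaker talks."""
    index = defaultdict(list)
    for turn in turns:
        index[turn.speaker_id].append((turn.start, turn.end))
    return dict(index)


def render(turns: List[Turn]) -> str:
    """Format turns as a transcript enriched with time, speaker and gender."""
    return "\n".join(
        f"[{t.start:.1f}-{t.end:.1f}] {t.speaker_id} ({t.gender}): {t.text}"
        for t in turns
    )


# Invented sample data.
turns = [
    Turn(4.2, 19.8, "spk01", "female", "good evening and welcome to the news"),
    Turn(19.8, 31.5, "spk02", "male", "thank you we are live from the scene"),
    Turn(31.5, 40.0, "spk01", "female", "we will return to that story later"),
]

occurrences = index_by_speaker(turns)
transcript = render(turns)
```

Looking up `occurrences["spk01"]` then returns every interval attributed to that speaker, which is the kind of query the speaker labels make possible.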